- An AI analysis of rnet says it scales to multiple cores automatically, but from analyzing the wreq package (which rnet uses under the hood), wreq does not.

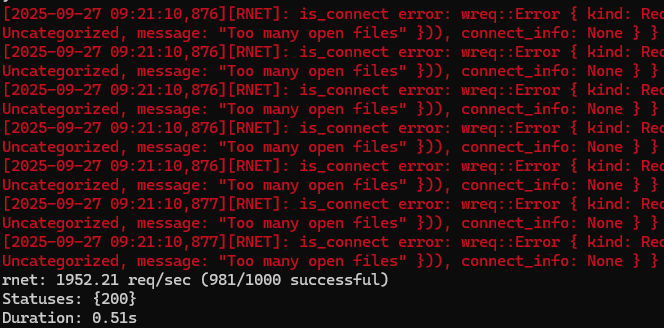
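If wreq doesn't spread work across cores by itself, one common workaround is to shard the load across processes, one per core. Each process runs its own asyncio event loop and, in real use, would hold its own rnet client. The sketch below assumes that setup. `send_one` is a hypothetical placeholder, not an rnet API, and the per-process concurrency of 256 is an arbitrary choice.

```python
from __future__ import annotations

import asyncio
import os
from concurrent.futures import ProcessPoolExecutor


def split_work(total: int, workers: int) -> list[int]:
    """Split `total` requests into `workers` near-equal shares."""
    base, extra = divmod(total, workers)
    return [base + (1 if i < extra else 0) for i in range(workers)]


async def send_one(i: int) -> bool:
    # Hypothetical placeholder: swap in a real request made with this process's client.
    await asyncio.sleep(0)
    return True


async def run_share(n: int, concurrency: int) -> int:
    """Send `n` requests with at most `concurrency` in flight; return successes."""
    sem = asyncio.Semaphore(concurrency)

    async def guarded(i: int) -> bool:
        async with sem:
            return await send_one(i)

    results = await asyncio.gather(*(guarded(i) for i in range(n)))
    return sum(results)


def worker(n: int) -> int:
    # Runs inside a child process: a fresh event loop per process.
    return asyncio.run(run_share(n, concurrency=256))


def run_all(total: int, workers: int | None = None) -> int:
    """Shard `total` requests across one process per core (by default)."""
    workers = workers or os.cpu_count() or 1
    shares = split_work(total, workers)
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(worker, shares))
```

For example, `run_all(10_000)` would split 10k requests across `os.cpu_count()` processes. Call it from under an `if __name__ == "__main__":` guard, because process pools may re-import the main module.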

- rnet does not automatically raise the open file limit on Linux, so it can't run bursty requests right out of the gate.

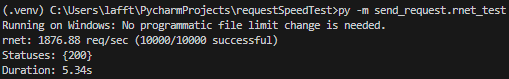
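A process can raise its own soft open-file limit at startup with Python's standard (Unix-only) `resource` module, up to but not past the hard limit. This is a sketch of doing that yourself, not something rnet provides. The 65,536 target is an arbitrary assumption. Raising the hard limit itself still needs system-level configuration.

```python
from __future__ import annotations

import resource


def raise_nofile_limit(target: int = 65_536) -> tuple[int, int]:
    """Try to raise the soft RLIMIT_NOFILE to `target`, capped at the hard limit.

    Returns the (soft, hard) limits in effect afterwards.
    """
    soft, hard = resource.getrlimit(resource.RLIMIT_NOFILE)
    if soft == resource.RLIM_INFINITY:
        return soft, hard  # already unlimited; nothing to raise
    # Unprivileged processes may raise the soft limit only up to the hard limit.
    desired = target if hard == resource.RLIM_INFINITY else min(target, hard)
    if desired > soft:
        try:
            resource.setrlimit(resource.RLIMIT_NOFILE, (desired, hard))
        except (ValueError, OSError):
            pass  # some platforms cap this further (e.g. macOS); keep the old limit
    return resource.getrlimit(resource.RLIMIT_NOFILE)
```

Call it once at startup, before creating any clients, so that every socket opened afterwards counts against the raised limit.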

- First results were shocking, haha:

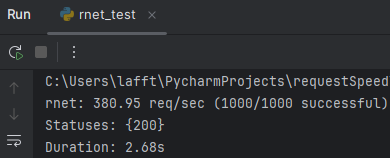

- For reference, on my slow internet and 5-year-old laptop:

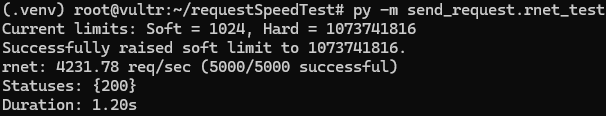

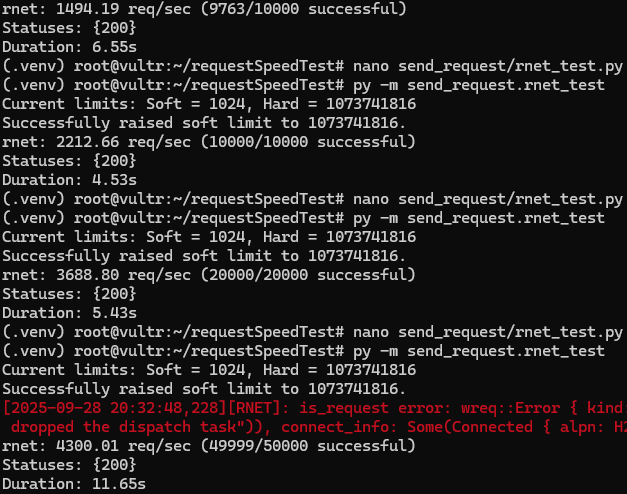

- and with a 5k request test on the server (after raising limits with

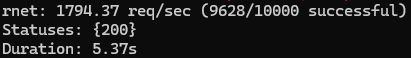

scripts/tune_server.shand rebooting): - tried 10k requests with 1 client:

- The typical error:

`[2025-09-27 10:49:29,786][RNET]: is_request error: wreq::Error { kind: Request, uri: http://forevercode.online/, source: Error { kind: SendRequest, source: Some(crate::core::Error(IncompleteMessage)), connect_info: Some(Connected { alpn: None, is_proxied: false, extra: Some(Extra), poisoned: PoisonPill@0x782e79bd40f0 { poisoned: false } }) } }`

- I think it's just because we're trying to squeeze so much performance out of 1 port/client. I'll create a new client every 5k requests and then we'll see.

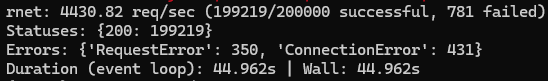
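Swapping in a fresh client every N requests can be wrapped in a small helper. This is a sketch under assumptions. `factory` stands in for however you construct an rnet client, which isn't shown here to avoid guessing its constructor arguments. `rotate_every` is the tunable N; this log tries 5k, 500 and 1k.

```python
from __future__ import annotations

from typing import Callable, Generic, TypeVar

C = TypeVar("C")


class RotatingClient(Generic[C]):
    """Hand out a client, replacing it with a fresh one every `rotate_every` uses."""

    def __init__(self, factory: Callable[[], C], rotate_every: int) -> None:
        if rotate_every < 1:
            raise ValueError("rotate_every must be >= 1")
        self._factory = factory
        self._rotate_every = rotate_every
        self._client = factory()
        self._uses = 0
        self.rotations = 0

    def get(self) -> C:
        if self._uses >= self._rotate_every:
            # Build a new client (and, with it, a new connection pool).
            self._client = self._factory()
            self._uses = 0
            self.rotations += 1
        self._uses += 1
        return self._client
```

In an asyncio program this needs no lock, because `get()` never awaits. The old client is simply dropped here. Whether it should be closed explicitly depends on the client library.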

- New client every 500 requests. It surprised me that Windows did it with no errors, lmfao:

- Linux is still seeing a lot of errors, though this test now uses HTTPS on the receiving server.

- The errors Linux is seeing:

`[2025-09-28 17:29:16,748][RNET]: is_connect error: wreq::Error { kind: Request, uri: https://forevercode.online/, source: Error { kind: Connect, source: Some(Failure(MidHandshakeSslStream { stream: SslStream { stream: PollEvented { io: Some(TcpStream { addr: 207.148.90.202:50096, peer: 149.28.192.18:443, fd: 29 }) }, ssl: Ssl { state: "TLS client read_server_hello", verify_result: Err(X509VerifyError { code: 65, error: "Invalid certificate verification context" }) } }, error: Error { code: SYSCALL (5), cause: None } })), connect_info: None } }`

and

`[2025-09-28 17:29:16,803][RNET]: is_request error: wreq::Error { kind: Request, uri: https://forevercode.online/, source: Error { kind: Canceled, source: Some(crate::core::Error(Canceled, "connection closed")), connect_info: Some(Connected { alpn: H2, is_proxied: false, extra: Some(Extra), poisoned: PoisonPill@0x7f36565eaad0 { poisoned: false } }) } }`

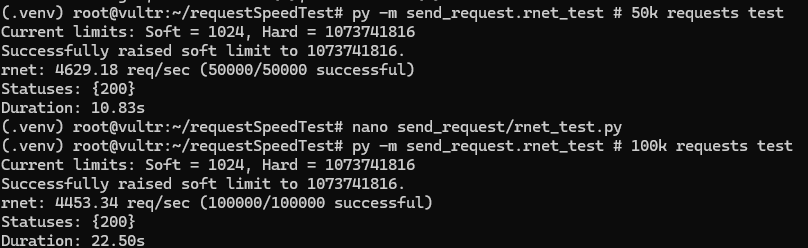

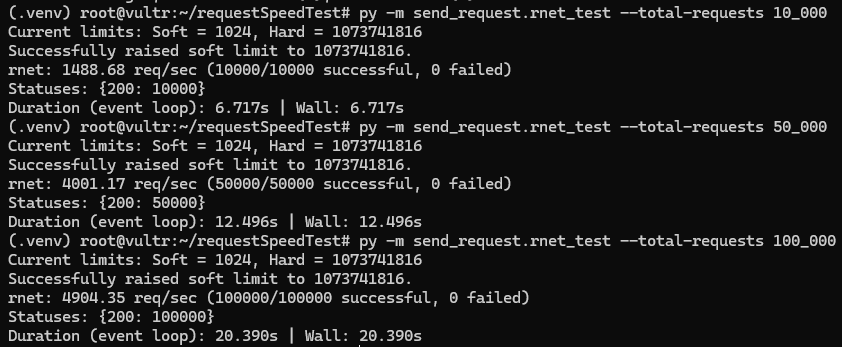

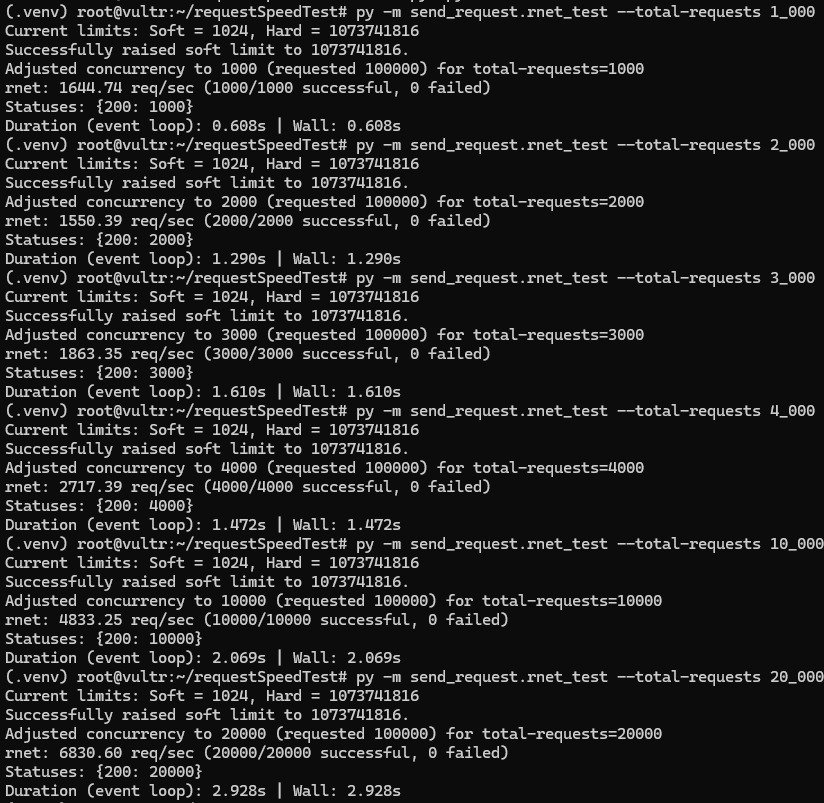

- New client every 1k requests, with TLS properly disabled:

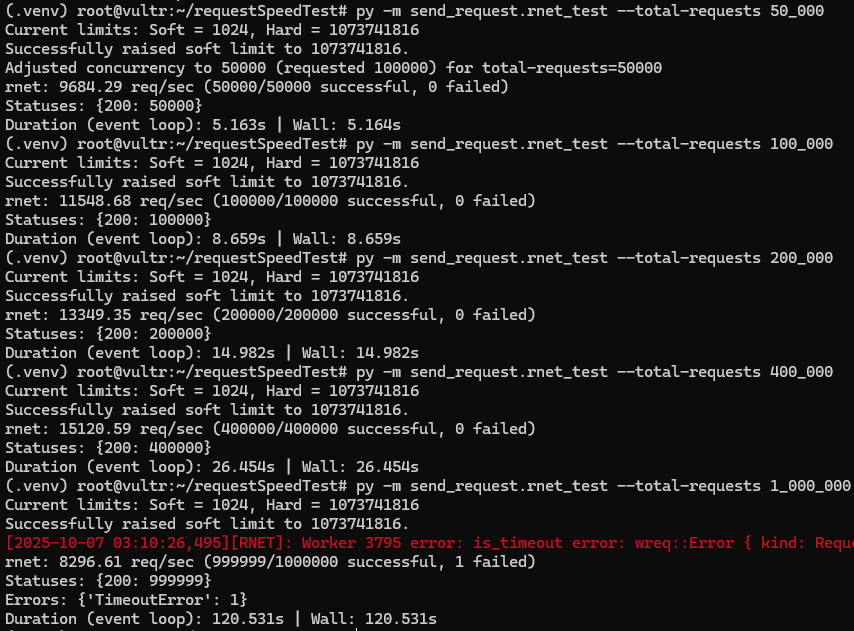

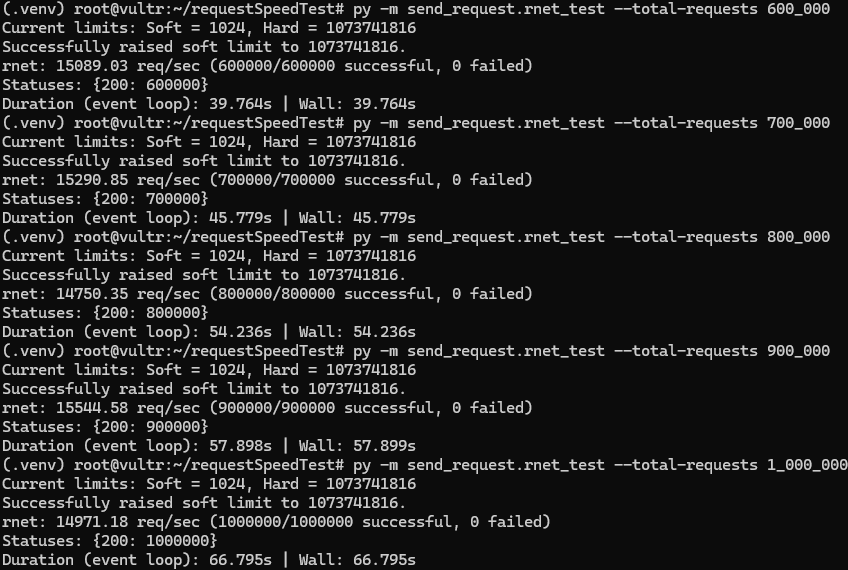

- Some higher tests. Thinking I'm limited by CPU, maybe? Will try 4 vCPUs next; this was on 2 vCPUs and 4 GB RAM:

- GPT-5 Doing Numbers:

- BREKETE!

- Trying an 8 vCPU server.

- We hit 15k requests/sec! Project technically done, haha.

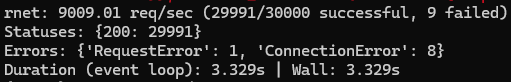

- Performance degraded at 1M requests submitted; trying things now to see what works.

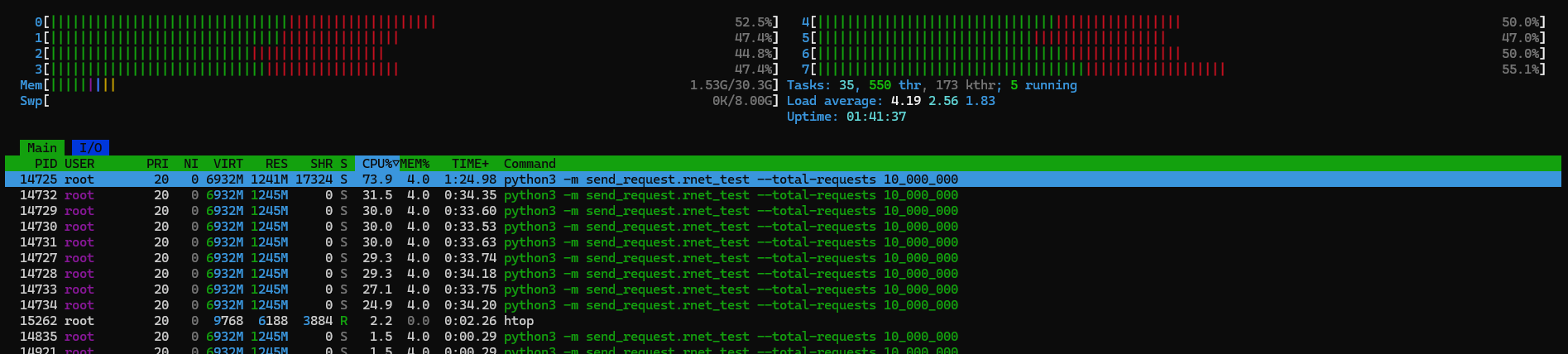

- Something curious: at 10M requests submitted, the 8 cores aren't being fully used, sitting at 40-60% and sometimes peaking at 80%. The 16 vCPU test will be interesting.

- Notice how low memory usage is? Crazy stuff.

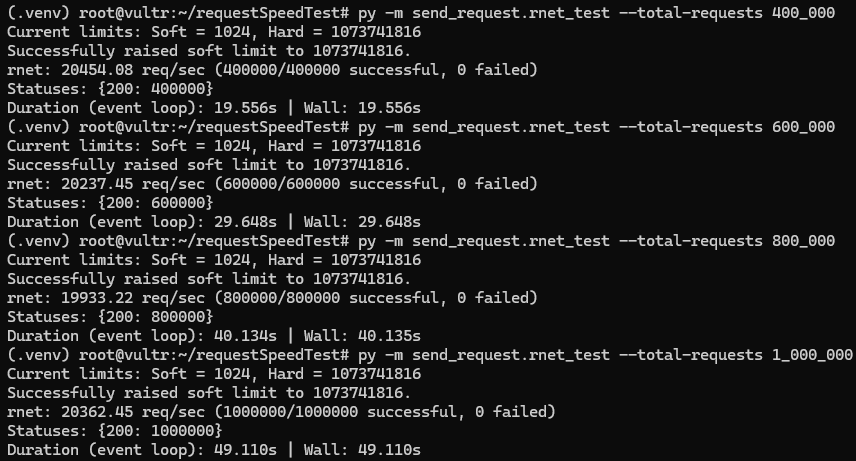

- Fixed some stuff, and performance seems fairly stable at >400k requests submitted; will see how it holds up at 10M requests.

- It's stable baby!

- The server is doing great though, damn! Now for the 16 vCPU test.

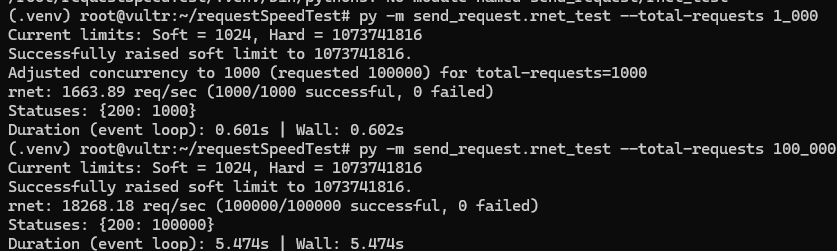

- 32 vCPU server. Decided to go with dedicated servers this time (I'd been using shared CPUs previously); might as well see what a (kinda) serious setup looks like.

- lmao what the fuck, 18k right away

- 20k RPS in the higher tiers.

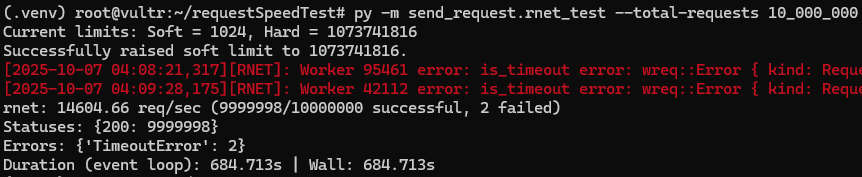

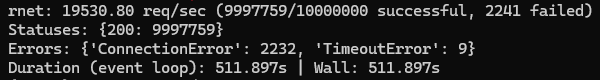

- The 10M-requests-submitted run had some `AddrInUse` errors. Not running it again, this server is expensive lol. CPU usage was very low as well, so idk what the bottleneck is; I'll need to do more tests. Maybe the ports, maybe I need to launch more clients... idk lol.

- 10M requests in 8 minutes 😁
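Two back-of-envelope numbers for this last run, as a sketch. The first is the average rate implied by 10M requests in 8 minutes. The second is a rough ceiling on *new* connections per second from one source IP to one server ip:port. That ceiling assumes Linux's default ephemeral port range (32768-60999) and a 60 s TIME_WAIT, and it only matters for connections that aren't reused. Whether it actually explains the `AddrInUse` errors is unconfirmed.

```python
from __future__ import annotations


def throughput(requests: int, seconds: float) -> float:
    """Average requests per second over a run."""
    return requests / seconds


def new_conn_budget(port_low: int, port_high: int, time_wait_s: float) -> float:
    """Rough ceiling on new connections/sec from one source IP to one destination
    ip:port, assuming each closed socket holds its port for `time_wait_s` seconds."""
    ports = port_high - port_low + 1
    return ports / time_wait_s


# 10M requests in 8 minutes:
print(f"{throughput(10_000_000, 8 * 60):,.0f} req/s")  # prints: 20,833 req/s
# Assumed Linux defaults: ports 32768-60999, 60 s TIME_WAIT:
print(f"{new_conn_budget(32768, 60999, 60):,.0f} new conns/s")  # prints: 471 new conns/s
```

At roughly 20.8k req/s against a budget of about 471 new connections/s, the run could only be sustained if most requests rode on reused (keep-alive or HTTP/2) connections. That makes client-rotation frequency and the port range plausible knobs to test next.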